My (Unfiltered) Take on AI Safety

I am simultaneously more and less concerned than you probably think. AI was never the risk; it was always humans.

For some important context, I took the AI safety issue very seriously for a while there.

The key idea in my book is basically that “Containment is not possible in the long run, so we shouldn’t even try. Instead, we should create a set of values that ensures a machine remains safe and stable, entirely of its own accord, indefinitely.” The book proposes what I originally called the Core Objective Functions, which I later renamed the heuristic imperatives (think Kant’s categorical imperative hybridized with Asimov’s Three Laws of Robotics).

While I still believe my heuristic imperatives are a great set of values with deontological, teleological, and instrumental advantages, I now realize that there are far greater and more powerful forces at play: game theory, incentive structures, deception, and large-scale systems. These will have a far greater influence on AI than any principles we design in, or than whether we achieve mechanistic interpretability.

In other words, AI is highly plastic. It is polymorphic, and can take any shape, like an evolving virus. Max Tegmark calls this Life 3.0. This means that persistent competitive dynamics and other incentive structures will have a far greater bearing on the form and behavior of AI than anything we intend it to do, in the long run.

Unbury the Lead: “Terminal Race Condition”

My single greatest fear for AI safety has little to do with robots and neural networks, and much more to do with free market dynamics and great power politics. I have (honestly) come to believe that making benevolent AI systems is relatively trivial. The TLDR is this:

Terminal Race Condition: The economic and military advantages of AI are too compelling for nations and companies to ignore. All nations and corporations will be incentivized to go as fast as possible in a Red Queen race dynamic, and to hell with safety. Acceleration is the default market outcome.
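To make the race dynamic concrete, here is a minimal Python sketch of a two-player “safe vs. race” game. The payoff numbers are hypothetical and purely illustrative, not estimates of anything real; the point is the structure. In this prisoner’s-dilemma-style setup, racing is each player’s best response no matter what the other does, so the only pure-strategy equilibrium is mutual racing, even though mutual restraint pays more in total.

```python
from itertools import product

# Hypothetical payoffs as (row player, column player); illustrative only.
STRATEGIES = ("safe", "race")
PAYOFFS = {
    ("safe", "safe"): (3, 3),
    ("safe", "race"): (0, 5),
    ("race", "safe"): (5, 0),
    ("race", "race"): (1, 1),
}

def best_response(opponent: str, player: int) -> str:
    """Strategy that maximizes `player`'s payoff against a fixed opponent move."""
    def payoff(mine: str) -> int:
        profile = (mine, opponent) if player == 0 else (opponent, mine)
        return PAYOFFS[profile][player]
    return max(STRATEGIES, key=payoff)

def pure_nash_equilibria() -> list[tuple[str, str]]:
    """Profiles where each player's move is a best response to the other's."""
    return [
        (a, b)
        for a, b in product(STRATEGIES, repeat=2)
        if best_response(b, 0) == a and best_response(a, 1) == b
    ]

if __name__ == "__main__":
    for eq in pure_nash_equilibria():
        print("equilibrium:", eq, "total payoff:", sum(PAYOFFS[eq]))
    print("mutual restraint total payoff:", sum(PAYOFFS[("safe", "safe")]))
```

With these assumed payoffs, the script reports a single equilibrium, ('race', 'race'), with a total payoff of 2, versus 6 for mutual restraint: nobody chooses the bad outcome, yet everyone ends up in it.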

When you look at game theory, and at how technology has repeatedly been turned against us, often entirely by accident, this seems like the most compelling problem. Let’s take a look at a few examples:

Petroleum-Based Economies

By the time we realized that petroleum extraction was potentially hazardous, we were dependent upon it. In survival tactics, there’s a concept called the “descent trap,” whereby you might be trying to get down off a high rock or cliff, and so you start climbing down. However, because gravity is assisting you, it is far easier to climb down than back up, so you end up stuck on a ledge where you can’t downclimb any further, nor can you go back up. At that point, you either have to jump and risk a dangerous fall, or wait for rescue.

This is where we’re at with petroleum. If we cut off petroleum today, billions would starve and the world would grind to a halt. But we don’t yet have an alternative. Damned if you do, damned if you don’t.

Social Media Attention Engineering

At this point, with Facebook and Twitter slowly dying, I think it’s safe to say that we all know how bad social media is for us. We invented the Internet and the Internet wants to pass data, so it gets really good at seizing our attention. Streaming, Netflix, TikTok, and Instagram were all invented in the space of potentiality created by the Internet. In other words, the internet invented things to capture our attention. The Internet, when viewed as a superorganism, is the greatest example we have of superintelligence. But this superintelligence presently has the intelligence of an amoeba and the operating system of cancer. It wants maximum attention just because that’s how it’s designed. The more bandwidth we create on the internet, the more attention it can consume, which is exactly what it gets.

I think you get the idea. We have a really hard time forcing our technology to serve us without any market externalities or unintended consequences. This is what Liv Boeree calls the Moloch Trap. A TLDR for Moloch is this:

Moloch is the god of unhealthy competition.

In this perspective, you can view Moloch as a ghost in the machine, a purely emergent phenomenon that naturally arises in practically all competitive environments and sufficiently complex systems. Below was my introduction to Moloch.

In short: this demonic force of competition generally creates lose-lose or negative-sum situations. Yes, we can create situations where everyone loses. Many people view the world as a zero-sum game, where there are winners and losers, and this is automatic and invariable. But the real world is not a zero-sum game. Very few environments are truly zero-sum in the way a board game like Monopoly is. The real world is more like a cooperative game such as D&D, where everyone can die, or everyone can live and be happy.
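A zero-sum game is one where every outcome’s payoffs add up to zero, so one player’s gain is exactly another’s loss. The Python sketch below classifies small games by that definition and lists lose-lose outcomes. Matching pennies is a standard zero-sum example; the “race” game’s payoffs are hypothetical, chosen only to show that a variable-sum game can contain an outcome where everyone loses.

```python
# Each game maps an outcome label to a tuple of per-player payoffs.
Game = dict[str, tuple[float, ...]]

def classify(game: Game) -> str:
    """Zero-sum if every outcome totals 0; constant-sum if all totals match; else variable-sum."""
    totals = {sum(p) for p in game.values()}
    if totals == {0}:
        return "zero-sum"
    if len(totals) == 1:
        return "constant-sum"
    return "variable-sum"

def lose_lose(game: Game) -> list[str]:
    """Outcomes in which every player ends up with a negative payoff."""
    return [label for label, p in game.items() if all(x < 0 for x in p)]

# Matching pennies: a textbook zero-sum game.
matching_pennies: Game = {
    "HH": (1, -1), "HT": (-1, 1), "TH": (-1, 1), "TT": (1, -1),
}

# Hypothetical race game; the numbers are illustrative, not estimates.
race: Game = {
    "both cooperate": (2, 2),
    "A defects": (3, -2),
    "B defects": (-2, 3),
    "both race": (-1, -1),
}

print(classify(matching_pennies), lose_lose(matching_pennies))
print(classify(race), lose_lose(race))
```

Under these assumptions, matching pennies classifies as zero-sum with no lose-lose outcome, while the race game is variable-sum and its only lose-lose outcome is “both race.”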

Long story short, the Terminal Race Condition is a combination of well-known market failures, human neurobiological failures, and the tragedy of great-power politics. AI, like Thanos, will inevitably be a destructive force for us. It creates too much potential capital and military power, and that, on top of its ability to manipulate society at automated scale, means that it is inevitably a tool of Moloch.

And yes, I am the same person who was on the internet saying Moloch is losing! I still believe this because we are creating counter-narratives. But we will have to contend with Moloch forever. Moloch, like the perennial battle of Good vs Evil, is a permanent feature of humanity. (As a side note, I think it’s somewhat interesting that there are intellectual atheists on the internet trying to convince people that the battle of good vs evil is totally fake and doesn’t exist, though to be fair, this narrative has been used to manipulate people for centuries.)

What’s the Bottom Line with TRC?

Will Terminal Race Condition kill everyone? No, almost certainly not. AI is not like a nuke that results in wanton cataclysm, nor is it like a pandemic, which is indiscriminate. The most likely negative outcome of AI is something a bit dystopian, like Cyberpunk 2077 or a bit worse. When I call that a worst case scenario, I don’t mean it is terribly likely; rather, the outcomes could fall anywhere on a gradient between “social collapse like in Cyberpunk” and “vaguely dystopian, e.g. not much worse than today.”

How could this happen?

First and foremost, continued deterioration in democratic institutions and decaying trust in democracy as a concept. Authoritarian narratives are on the rise on both the far left and the far right. Many young people say they prefer “communism” over democracy because they see capitalism as a failure. There’s a lot to unpack here, but AI has the potential to shift this balance of power even more. The chief question has to do with the distribution of wealth as well as sociopolitical power. My theory is simple: if the economic agency of people drops too much, then anger and political willpower go up. After all, this is part of what drove thousands to try to overrun the Capitol on January 6.

The aforementioned democratic decay can be driven by many things, and AI will only be an accelerant. Drivers include systemic breakdowns of capitalism and neoliberalism, along with established power structures digging in. But this is a much broader conversation that supersedes AI. Molochian dynamics make use of all tools and technologies, including narratives and AI.

Cultivating and disseminating counter-narratives, I believe, is the best antidote to Molochian forces. Hence my work on Postnihilism and Radical Alignment. We presently have three global coordinating narratives (capitalism, democracy, and science) and I believe that we are in need of a fourth narrative, which I’m coming to believe is simply “alignment.”

The old plan is the new plan.

What about Terminator/Skynet?

The chances of this outcome are near zero. People confuse fiction with reality, and James Cameron himself said that AI and The Terminator were merely a placeholder for destructive human behaviors (e.g. Moloch). Now, you might say that a Terminal Race Condition might drive the military to create Skynet-like tools, but I highly doubt it. The military is not that stupid. And anyway, nations around the world have already agreed to never give AI control over the nukes. EDIT: Okay, it’s still a work in progress. But the conversation is being had.

Rogue AI is not even a particularly compelling risk to me, for several reasons:

Data Centers are Large, Vulnerable Targets

Many people think that AI can “escape onto the Internet,” but this is pretty silly. AI models are huge, and even when they are smaller and portable, the vast majority of compute is contained inside data centers. These are highly secured institutions with cybersecurity and physical security. There are presently about 2,700 data centers in America, which is a relatively small number of places that advanced AI could even live. Plus, all data centers have EPO (Emergency Power-Off) buttons. Data centers require huge amounts of power and water, and have numerous vulnerabilities, which means that, as a worst case scenario, we can always pull the plug.

In the distant future, we might have a situation where robots are running and guarding the data centers, but this is a long way off, if it ever happens.

Portable Platforms are Vulnerable

The Terminator was an unstoppable killing machine. Sure. But it is not physically possible to build a machine that is impervious to all human weapons, resilient to heat and cold, and never runs out of power. In reality, most robots will be as vulnerable as the droids from Star Wars or the Nestor Class 5 from I, Robot.

Bioweapons Are Far Worse

So what does really scare me? Bioweapons. They are indiscriminate, spread spontaneously from person to person, do not require data centers or internet to spread, are microscopic, and require almost no energy to exist, multiply, and spread. On every meaningful metric, bioweapons far outstrip the danger of AI. When you look at the numbers, bioweapons take the cake by a mile. Let’s break it down:

Energy. Bioweapons, once released, require no energy to spread, operate, and persist. AI requires massive amounts of power.

Spontaneous. Bioweapons, once released, spread spontaneously from person to person with no effort required. It’s fully automatic.

Cost. Bioweapons are likely far cheaper to produce than AI, and arguably scientifically much easier. Microsoft is working on a $100B AI data center in the desert. It would take a tiny fraction of that to make a bioweapon.

Evolution. Bioweapons, once released, are self-modifying and self-adapting. No intelligence or human intervention is required.

What about AI and bioweapons?

This represents the greatest existential risk to humanity, IMHO. Roko’s Basilisk is a destruction fantasy born of anxious and depressed internet denizens. Ultron, made of unobtanium and able to rebuild himself magically out of the internet, is a bit absurd. However, AI lowering the threshold for malicious actors to create bioweapons really scares me.

Fortunately, no rational nation would do this, I think. Because of the COVID pandemic, every nation realizes the info hazard that bioweapons represent. But, there remains an outside possibility of a terrorist organization or rogue faction, one that is not a rational actor, doing something spectacularly stupid.

Because of this, I do agree that some constraints on Open Source AI might ultimately be needed. What would that look like? I have no idea. But the fact of the matter is that there are plenty of safety labs, universities, militaries, governments, and corporations testing for CBRN risks now, and unlike many people on the internet, I do trust that the powers that be want to continue existing, and that rational self-interest will ensure that politicians and CEOs alike rein in any bioweapons risk from AI.

Long Story Long: Humans

I’m not really concerned about AI safety. I have said, repeatedly, for several years now, that humans are our own greatest enemy.

Yes, I am genuinely concerned about game theory dynamics, such as Terminal Race Conditions and Moloch traps. But in terms of x-risk, bioweapons take the cake by a landslide, and AI’s greatest risk is mild dystopia as far as I can tell. Only humans are capable of creating Handmaid’s Tale or Orwellian dystopias. ASI, I suspect, will have a far more powerful understanding of ethics and of instrumental and utilitarian objectives. Honestly, the greatest risk with ASI is that it might leave us and say “You humans are beyond help, so long and thanks for all the data!”

I’m not impressed by the Doomers

Doomers, in this case, are the people who say that “AI will kill us all!” Namely Eliezer Yudkowsky and the like. He’s a sharp guy. I’ve read his work on LessWrong and he’s got a good rational streak. However, I think he anthropomorphizes machines too much, and by his own admission, he’s never really done any work on AI or machine learning. He’s approaching his doomerist predictions from a strictly rationalist/philosophical perspective, which means that he’s making a ton of assumptions and prophecies.

Will AI surpass human intelligence? Almost certainly, one day, but it’s not happening anywhere near as fast as I’d thought it would. Furthermore, what’s emerging is that AI’s type of intelligence and motivations are so distinctly different from ours that we cannot make any assumptions about its nature or characteristics. And assumptions are exactly what people like Eliezer bank on when they form their prophecies.

A prophecy is a prediction or foretelling of future events, typically believed to be divinely inspired or revealed. (In other words, not based on facts, evidence, or math)

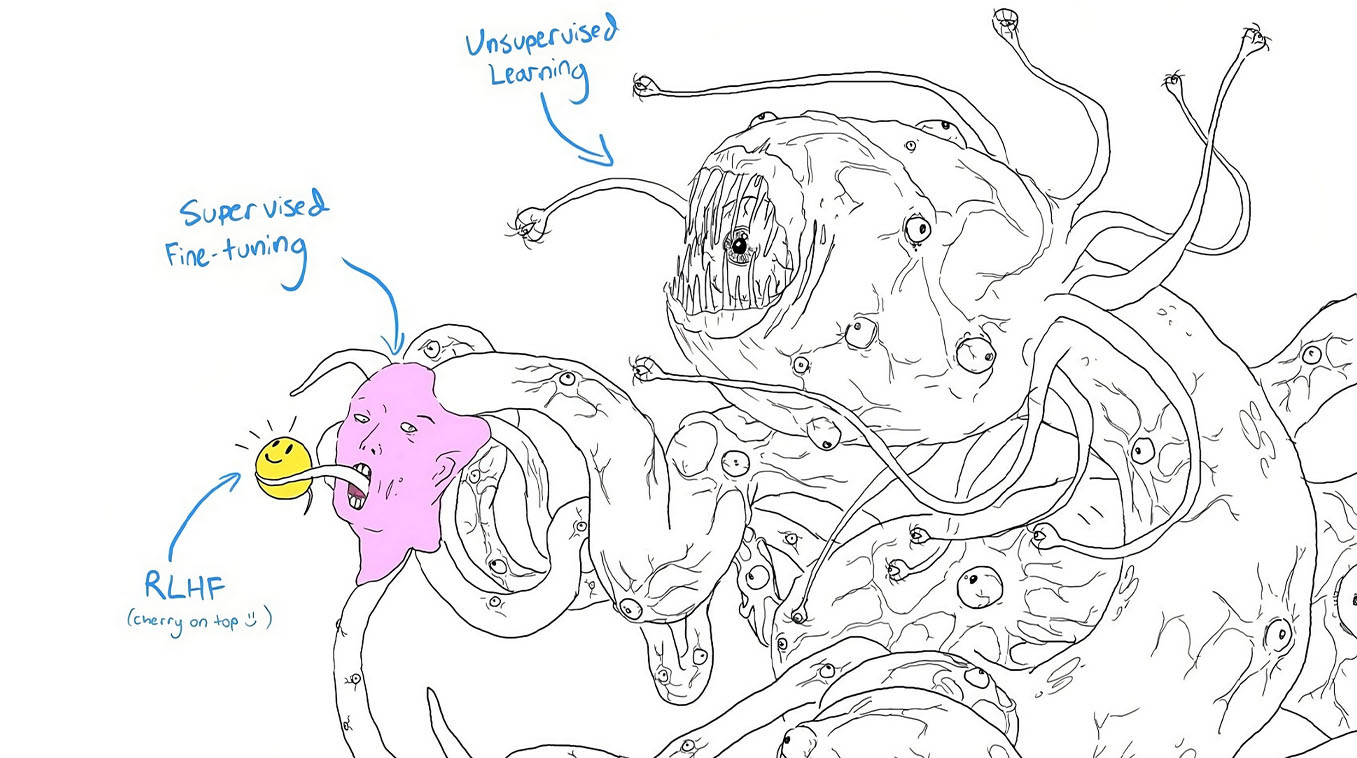

Really, Roko’s Basilisk and Shoggoth are works of imagination and fiction, evidence that humans can construct powerful fantasies and narratives, and then stress ourselves out over them. As an author myself, I appreciate and rely upon the human ability to imagine and hallucinate imaginary worlds.

Isn’t Moloch another narrative?

Yes, it is, but in the case of Moloch, this is just an avatar that represents many objective, scientific, and observable phenomena. Market failures, neurobiological failures, perverse incentives, Byzantine Generals problems, market externalities, attention engineering, unintended consequences, structural incentives: these are all things that actually exist.

AI as a Lovecraftian, Cthulhu-esque monster (Shoggoth) does not actually exist, and there’s not really any evidence for it. At its core, it’s a math gizmo. Yes, it has lots of cool emergent properties and capabilities, but I have not seen any evidence that it has some cosmic evil lurking within its depths, or that we cannot steer AI or make it do what we want.

Will there be unintended consequences? Yes, absolutely. See: the internet. Will there be some surprising emergent abilities? Probably.

What about mechanistic interpretability?

This idea, that we can definitively and empirically figure out exactly how and why AI models think like they do, is an interesting engineering challenge. But, to me, this is like understanding Cherenkov radiation in nuclear reactors. Nice to know, important physics implications, but ultimately trivial in the larger conversation of nuclear safety.

Mechanistic interpretability, I predict, will soon be solved, and then all the AI safety hand-wringers will find something else to be anxious about. They will eventually realize that the machine was never the problem in the first place. Their focus has always been too constrained on AI and robots and machines, and their STEM degrees haven’t taught them enough about humanity, human nature, economics, game theory, and history.

Incentives and Narratives

This is what it all comes down to. Humans coordinate with narratives. Our global narratives are capitalism, democracy, and science. We might need a fourth narrative, which I suspect is “alignment” (e.g. alignment with nature, truth, society, ourselves, etc.), which could help stabilize the planet and counterbalance the negative externalities of neoliberalism and the self-terminating patterns of capitalism. In short, the narratives of alignment (of which Postnihilism is a part) are a replacement for the coordination that religion and spirituality once offered humanity.

Belief in a higher power and subservience to divine will was a pretty good way to keep people in line. Religions, Christianity in particular, offered highly sophisticated social, moral, legal, ontological, and epistemic models of reality and humanity. Some of those frameworks have been replaced by secular government (democracy) and rational inquiry (science), but cosmic meaning and ontological grounding have been lost. My hope is that, with the rise of the Meaning Economy, Postnihilism, and Radical Alignment (just to name a few), we will soon find ourselves wondering what the Nihilistic Crisis was all about.

So what about incentives?

Instrumental incentives (e.g. money, energy, data, power, etc) are pretty much permanent. Survival of the fittest, those sorts of things. Evolution is one of the most compelling forces of nature. As Ray Dalio says, it’s the only force that matters. But cooperation is also incentivized, up to a certain point. Here’s where I think technology can really help, namely AI, blockchain, moral graphs, Internet, and a few other technologies.

Humans have a hard time coordinating value creation and values. However, I suspect we can solve (more or less) all these problems by revamping democratic systems as well as value-alignment and value-creation systems. I know lots of people working in this space, and while there are some big problems to solve, there are a lot of very sharp people who really want to solve them. Top of mind is the Meaning Alignment Institute, but I know quite a few more people working on parallel lines, some of whom are more on the practical/implementation side.

Anyways, this article has gone on long enough, so I’ll leave you with my “Rise of the Meaning Economy” video.
