My Hypothesis about OpenAI's Hard Pivot

Why did Ilya and Jan leave? Why did Sam get in bed with the Pentagon?

This entire post is simply speculation about Sam Altman’s reasoning for steering OpenAI in the direction he has.

TLDR

To put it plainly, I suspect that Sam Altman believes a few things:

That we have a much longer runway until AI or AGI becomes an existential threat to humanity AND/OR that the threat profile is not catastrophic/exfiltrated AI (e.g. the Skynet scenario)

That the true threat is actually humanity (always was)

That AI alignment and safety people are sanctimonious and superfluous

THEREFORE

It’s okay to let the purists and safety people go, and abolish the Superalignment team

It’s okay to pivot hard to be totally for-profit

It’s okay to get in bed with the Pentagon

CONTEXT: Recent Events at OpenAI

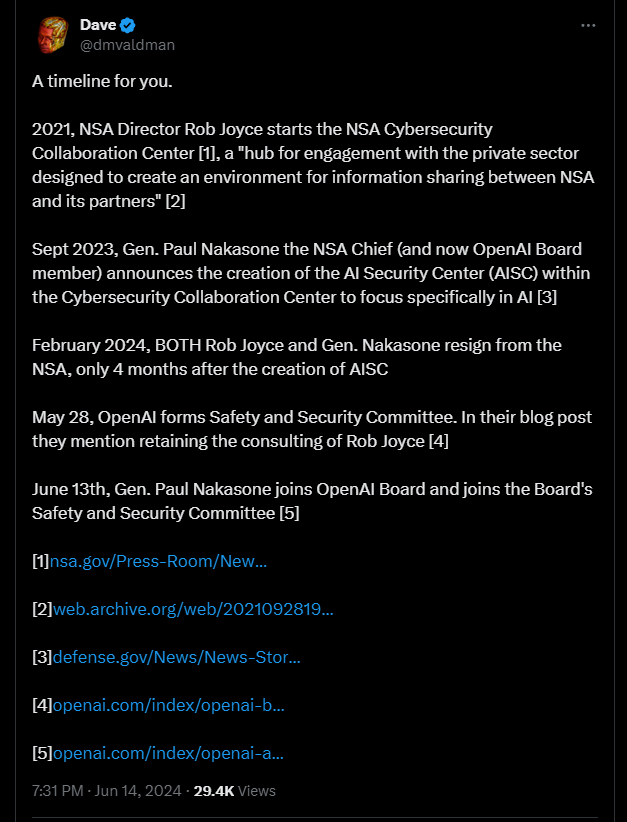

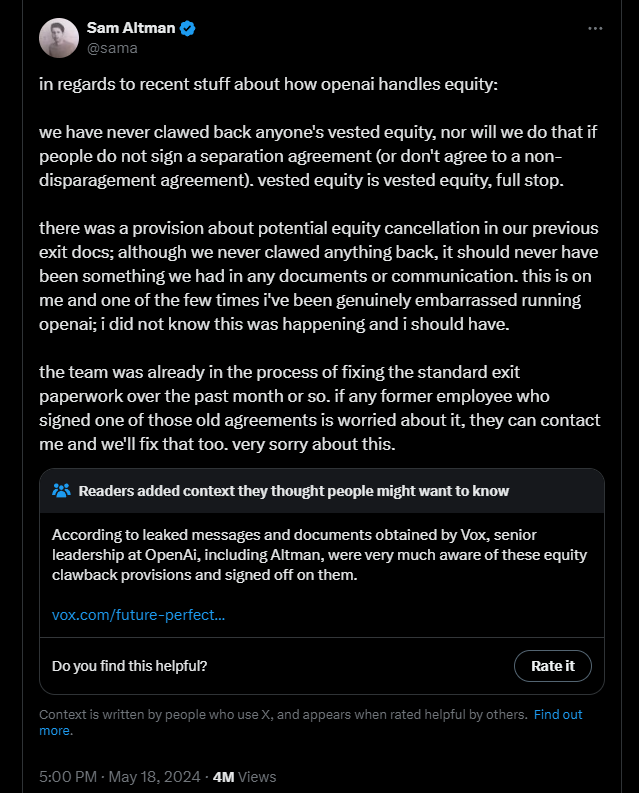

At a very high level, some interesting things have been going on at OpenAI. Ilya Sutskever and Jan Leike have both departed, along with a handful of other people. Then, there was the “oopsie daisy” of a rather toxic clawback clause for departing OpenAI employees that Sam Altman was “embarrassed” by (but signed off on). To make matters even worse for Sam, there are also rumors that he was willing to sell out to China or Saudi Arabia to start a bidding war for AGI.

Sources:

https://x.com/dmvaldman/status/1801759288572186840

https://x.com/aaronpholmes/status/1801785687030829240

https://x.com/sama/status/1791936857594581428

https://futurism.com/the-byte/former-openai-employee-agi-bidding-war

“To create safe AGI for the benefit of all humanity”?

Sam and OpenAI have stuck by their guns, saying that their goal is nothing short of safe AGI for the benefit of all. Now, some of this is rumor and some of this is straight from the horse’s mouth.

There seem to be some logical or ethical inconsistencies here. First, OpenAI has been much maligned as anything but “open.” Elon Musk sued OpenAI, but dropped the suit after OpenAI published some emails. It was a very odd tack, but apparently someone inside OpenAI decided it would be better to publicly shame Elon than to settle quietly through mediation. Still, Elon got his wish: OpenAI admitted that the “open” was never meant to be Open Source; it was meant to be “open, as in, available to everyone, but we control it.”

If it sounds like a Messiah narrative…

The feeling out of OpenAI seems to be this:

We, and only we, have the ability to save humanity! Stand back citizens!

This is further evidenced by Sam Altman’s past claims that AI was going to generate $100T and that OpenAI was “going to capture most of it” during an interview back in 2022 (I apologize, I cannot find the original interview).

Sticking to the Hard Facts

While there is a lot of speculation and rumor, we really only need to look at the hard facts and primary sources.

Sam Altman was briefly ousted from OpenAI for being “less than candid”

Jan Leike (safety) and Ilya Sutskever (research) left OpenAI under less-than-amicable circumstances. Jan said that the culture at OpenAI has changed, and that people are chasing shiny new products rather than safety research and other scientific objectives.

OpenAI has a shiny new safety board populated with folks from the NSA

Web 2.0 Mentality

Sam Altman came up at Y Combinator in the middle of the Web 2.0 revolution. He’s approaching AI products with an A/B testing mentality as well as the philosophy of “move fast and break things.”

Last summer, before all the grief with Ilya blew up, there were murmurings of an internal argument at OpenAI over testing first versus releasing quickly to see what people do. Sam won that argument. He argued that they had no way of knowing how people would use, abuse, and exploit their models until they released them into the wild. After all, Microsoft and every game studio have operated this way for a while now. It’s better to just let software products into the wild, see what breaks, and then fix it. Let your end-users be your primary testers; after all, they’ll do it for free. This is why you frequently see buggy launches of video games and extremely odd errors in new AI products.

Can you A/B test your way to AGI?

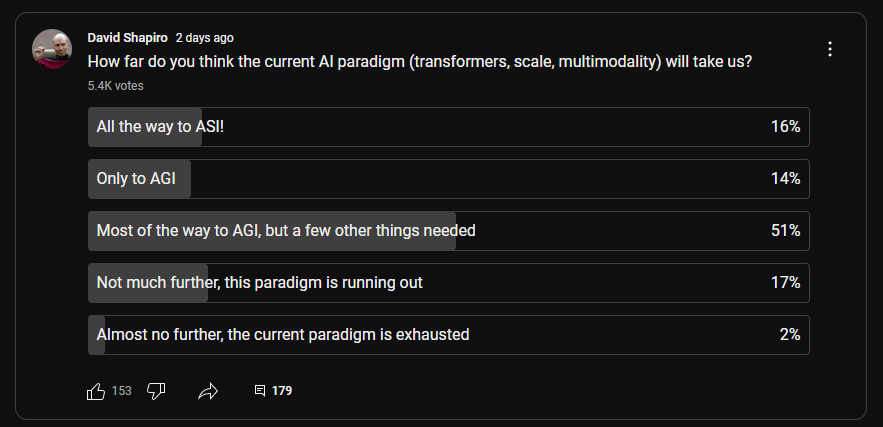

I recently made a video, though it was far from the first one, talking about how we’re likely to see diminishing returns as the current paradigm reaches maturity.

The TLDR is that there are a few trends that are conspiring to slow down AI progress:

Power constraints - AI training and GPUs are extremely power hungry, and the power grid can’t even support the demand

Data constraints - we have more or less exhausted valuable, useful data (which is why OpenAI is trying to purchase data from news agencies)

Hardware constraints - NVIDIA is building and selling GPUs as fast as possible, but you have to purchase and deploy them somewhere

Rising costs - Each successive generation of AI models costs roughly 10X the previous generation. Revenue is not rising quite that fast.
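To make the cost point concrete, here is a rough sketch of how the gap compounds. The 10X cost multiplier is the figure from the list above; the 3X revenue multiplier and the starting dollar amounts are purely hypothetical placeholders, not reported numbers.

```python
def cost_vs_revenue(generations, cost_start, revenue_start,
                    cost_growth=10.0, revenue_growth=3.0):
    """Compound cost and revenue per model generation.

    cost_growth=10.0 mirrors the "roughly 10X" claim in the text.
    revenue_growth=3.0 is an assumed, illustrative value only.
    Returns a list of (generation, cost, revenue, cost/revenue) tuples.
    """
    rows = []
    cost, revenue = cost_start, revenue_start
    for gen in range(generations):
        rows.append((gen, cost, revenue, cost / revenue))
        cost *= cost_growth
        revenue *= revenue_growth
    return rows


if __name__ == "__main__":
    # Hypothetical starting point: $100M training cost, $1B revenue.
    for gen, cost, revenue, ratio in cost_vs_revenue(4, 100e6, 1e9):
        print(f"gen {gen}: cost=${cost:,.0f} revenue=${revenue:,.0f} "
              f"cost/revenue={ratio:.2f}")
```

Under these assumed numbers, the cost-to-revenue ratio grows by a factor of 10/3 every generation, so any revenue multiplier below 10X eventually gets overtaken no matter how comfortable the starting position looks.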

The net effect is that AI is facing a lot of friction and a lot of headwinds. The current paradigm of transformers+scale+multimodality is facing diminishing returns.

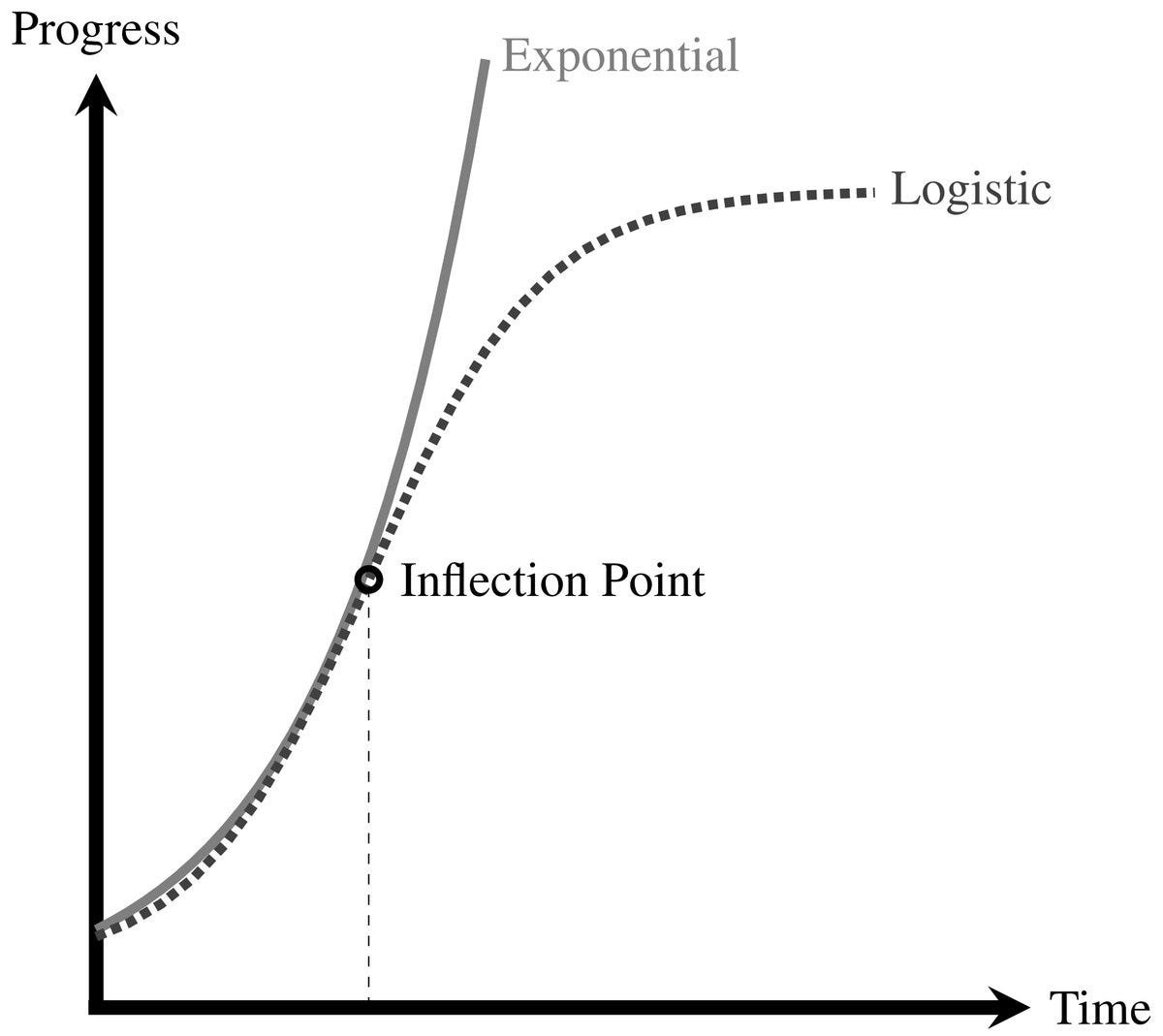

Basically, we are at a divergence point, as illustrated succinctly above.

Now, therefore, we have more time

If Sam Altman and OpenAI are paying attention to the same trends and data that I, Yann LeCun, Gary Marcus, and a whole slew of other rationalists and insiders are watching, then there’s pretty much only one conclusion: the current paradigm will not get us to AGI, and we’ve also got some time before it gets really crazy.

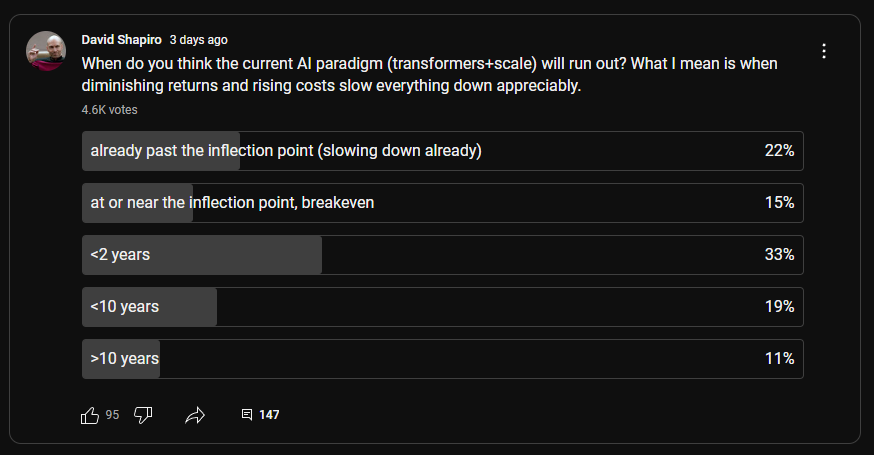

Even my audience generally agrees that the current paradigm is at or near exhaustion.

The Real Threat Profile

For the longest time, we’ve all been terrified of Skynet and Terminator. This risk profile is espoused by people like Eliezer Yudkowsky, who says that any super-intelligence will inevitably kill all humans.

I have said for a while that humans are the greatest existential threat to human existence. Our own stupidity, avarice, and belligerence are our greatest risks.

“No, Mr. Altman, I expect you to comply!” ~ The NSA, probably

Here’s how I think it went down…

Summer 2023: Arguments inside OpenAI start to get heated around the safety/release/testing cycle. Altman is in favor of rapid release and short iteration (i.e. the Web 2.0 mentality). Ilya is very much in favor of slower testing cycles and more science. This is representative of two fundamentally different worldviews and technological paradigms. Altman, as a CEO and product guy, knows how to launch products and A/B test. Ilya does not.

Autumn 2023: It all comes to a head. Altman is briefly ousted from OpenAI for being “less than candid,” which may be a matter of perspective. Being charitable here, it’s entirely possible that he just engages in corporate doublespeak, as most CEOs learn to do, and that more direct scientific autists like me and Ilya just find it distasteful. Around this time, Microsoft steps in and strongly encourages some better board behaviors and internal restructuring. Ilya had already faced social capital punishment (i.e. credibility reduced to zero because he took a shot at the alpha). I’m honestly surprised it took him that long to leave. Shortly after the whole thing, Ilya tweeted (then deleted) “the beatings will continue until morale improves”.

Winter 2023/24: Sam has been consolidating his power in OpenAI and working on pivoting away from the old safety paradigm. People noticed that OpenAI changed its usage policy so it can work with the military. He knew that it was only a matter of time before the Superalignment team and Ilya got the message: they were done at OpenAI. A lot of managers are trained to do this: you just make people so uncomfortable that they finally leave of their own volition. It’s way cheaper than firing people. This happens all the time in every large corporation, and especially in tech companies.

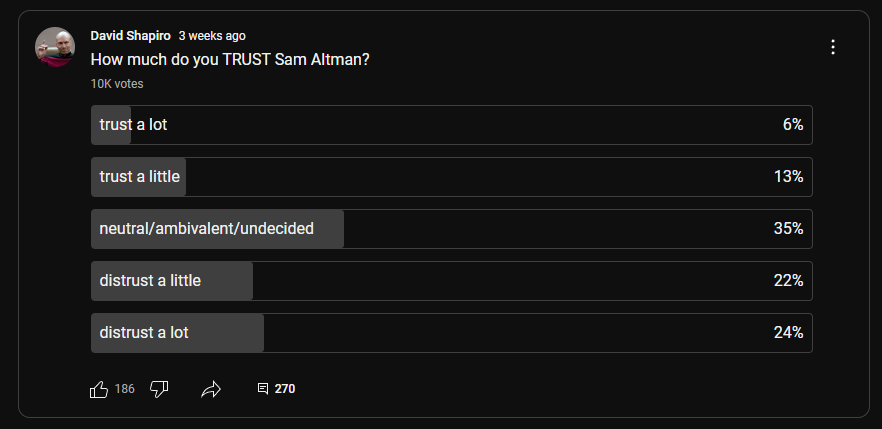

Spring 2024: Ilya and company finally depart, but Sam also gets in a lot of hot water for generally just looking more and more like a sellout. Even my audience was done with Sam before a lot of this blew up, as the poll above shows.

Summer 2024: Now that the pesky, sanctimonious, and superfluous AI safety folks have left (not my opinion, just speculating on how Sam sees them), Sam can fully cooperate with the US military, switch to being for-profit, and just align with the US establishment wholesale.

Does it look shifty? Yeah. Does it look like he threw out all his values? Yeah. Is it a rational set of choices and decisions? I think so. Do I trust him more because of this? Absolutely not.

What this series of events indicates to me is that Sam has learned, the hard way, how the real world works. He had his head in the clouds with hubris and idealism, and honestly meant what he said, as a True Believer at the beginning. However, he’s learned through repeated mistakes and setbacks the same thing that a lot of us gifted people learn: sometimes the tried and true way is really just the better way. What I mean by this is corporate governance, economic pressures, and aligning with the establishment.

It’s the kind of problem where your hands are tied: you can either update your beliefs or double down. Either way, you’re damned if you do, damned if you don’t. As a public figure, a lot of people get irritated with me when I update my views based upon evidence, facts, and changing variables. Some people are impressed.

Would I rather live in a world where OpenAI is in bed with the Pentagon than with China or Saudi Arabia? Absolutely. The whole thing reminds me of Palantir’s position of absolutely refusing to work with China.

Four-star Admiral John Aquilino, who led US Indo-Pacific Command, has said the following:

China’s goals are incompatible with our way of life

If there was ever any truth to Sam Altman wanting to sell AGI to China, that is absolutely alarming, and unacceptable to me as an American citizen. I can imagine a naive Sam Altman who used to view the world through the exclusive lens of tech making such decisions. I can also imagine that such a sharp guy, who is well connected to world leaders, would also eventually pull his head out of his ass and start living in the real world, rather than his personal fantasy world where his prepper bunker will save him.

Now we know:

https://x.com/ilyasut/status/1803472978753303014?s=46

I see other examples of that same behaviour around us.

Extremely valuable skills like perseverance and grit, aligned with the wrong purpose.

Altman probably deserves to be regarded as The Incombustible Sam, but I wonder what actual goal and values are behind his figure.

Kudos to you for this analysis, David.